Understanding Modern Sanctions Name Screening Using Fincom As A Reference Architecture

- Chandrakant Maheshwari and Pranjal Dubey

- Mar 10

Updated: Apr 3

Sanctions name screening is often discussed in abstract terms, yet many of its most consequential challenges are easiest to understand through concrete examples. Variations in spelling, language, script, and naming conventions regularly undermine otherwise well-intentioned screening controls. The result is often a combination of excessive alert volumes and missed matches that are only discovered after the fact.

This article examines these challenges using publicly observable behavior from the Office of Foreign Assets Control (OFAC) Sanctions List Search as a baseline, and a multilingual screening implementation from Fincom as a reference architecture.

The purpose is educational: to illustrate what modern sanctions screening systems must address, not to promote any single solution.

1. Baseline behavior: fuzzy name matching in public sanctions search tools

Public sanctions search tools, including the OFAC Sanctions List Search interface, provide transparency into how approximate name matching is commonly implemented. These tools typically allow users to adjust a similarity threshold to broaden or narrow results. In the example below, the name “Khaled Mashal” is searched using a commonly referenced fuzzy threshold.

Figure 1: OFAC Sanctions List Search Interface Showing Name Query and Threshold Slider

At a similarity threshold of approximately 72 percent, the search returns a very large number of results (over 170 in this example). Most of these results share only a partial name component such as “Khaled,” but are otherwise unrelated individuals.

This illustrates two structural characteristics of threshold-based fuzzy matching:

Lowering the threshold increases recall but rapidly expands the candidate set.

Analysts are required to manually review many irrelevant records to confirm that none correspond to the intended sanctioned individual.

From an operational perspective, this creates alert fatigue. From a risk perspective, it creates a more subtle problem: after reviewing a large result set and finding no relevant match, users may conclude that no sanctioned party exists, even when one does under a different spelling or representation.
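Both effects can be reproduced with a toy threshold search. The sketch below is an illustration only. It uses Python's `difflib.SequenceMatcher` ratio as a stand-in character-level similarity, which is not the algorithm the OFAC tool uses. Apart from the listed form "MISHAAL, Khalid", every name in `TOY_LIST` is hypothetical. Lowering the threshold admits more lookalike names, yet the listed form of the intended individual still scores too low to appear.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive character-level similarity in [0, 1] (difflib ratio, a stand-in metric)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def fuzzy_search(query: str, names: list[str], threshold: float) -> list[tuple[str, float]]:
    """Return (name, score) pairs scoring at or above the threshold, best first."""
    scored = [(name, similarity(query, name)) for name in names]
    hits = [(name, score) for name, score in scored if score >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Toy list: every entry except the listed form "MISHAAL, Khalid" is hypothetical.
TOY_LIST = [
    "Khaled Hassan",
    "Khaled Nasser",
    "Khalil Haddad",
    "Mashal Trading",
    "MISHAAL, Khalid",
]

# Lower thresholds return more candidates for an analyst to clear by hand.
for threshold in (0.9, 0.8, 0.7, 0.6, 0.5):
    hits = fuzzy_search("Khaled Mashal", TOY_LIST, threshold)
    print(f"threshold={threshold:.1f} hits={[name for name, _ in hits]}")
```

At a threshold of 0.7, "Khaled Hassan" is returned but "MISHAAL, Khalid" is not, because its score is only about 0.36.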

2. Representation mismatch: when sanctioned names do not appear as expected

In this example, the intended sanctioned individual does exist on OFAC's Specially Designated Nationals and Blocked Persons (SDN) List, but under a spelling and name order that differ materially from the input string. This mismatch is common for names that originate in non-Latin scripts, where several transliterations are equally valid.

Figure 2: Detailed OFAC Record Showing “MISHAAL, Khalid” with Associated Metadata

Here, the sanctioned name appears as “MISHAAL, Khalid,” with additional contextual information such as place of birth and program designation. The difficulty lies not in the absence of data, but in the gap between how the name is entered by the user and how it appears on the list.

This gap is a data representation problem, not an analyst error.
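The following sketch separates the two parts of this gap. It assumes that a comma in a list entry marks a "LAST, First" format, which is a hypothetical convention used here for illustration. The similarity function is again `difflib`'s ratio, used as a stand-in. Reordering the listed name closes much of the gap. The remaining shortfall comes from transliteration differences (Khaled/Khalid, Mashal/Mishaal) that no amount of reordering removes.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive character-level similarity in [0, 1] (difflib ratio, a stand-in metric)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def normalize_order(name: str) -> str:
    """Rewrite a list-style 'LAST, First' entry as 'first last'; other names are only lowercased."""
    if "," in name:
        last, first = name.split(",", 1)
        name = f"{first.strip()} {last.strip()}"
    return " ".join(name.lower().split())


query = "Khaled Mashal"
listed = "MISHAAL, Khalid"

raw = similarity(query, listed)
reordered = similarity(normalize_order(query), normalize_order(listed))
print(f"raw={raw:.2f} reordered={reordered:.2f}")  # prints raw=0.36 reordered=0.81
```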

3. Native-script and cross-lingual comparison as an educational example

To illustrate how some modern screening systems address this challenge, the meeting included a demonstration of a multilingual comparison tool that evaluates names across scripts without requiring manual transliteration.

In this reference example, the Latin-script input “khaled mashal” is directly compared to the Arabic-script representation of the same name.

Figure 3: Cross-Script Name Comparison Interface Showing Latin and Arabic Inputs

The comparison output displays two separate measures:

A phonetic equivalence indicator showing that the names are pronounced alike.

A distance score reflecting how closely the representations correspond after accounting for language and script differences.

The educational takeaway here is not the specific score values, but the principle: meaningful comparison can occur even when names are written in different scripts, provided the system is designed to account for linguistic structure rather than relying solely on string similarity.
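The principle can be illustrated with a deliberately simple approach. Names in either script are reduced to a shared "consonant skeleton", and two measures similar to those described above are reported: an equivalence flag and a normalized edit distance. This is a hypothetical toy and not Fincom's method. The letter mapping is a rough simplification. It drops ع (ain), treats ا, و and ي as vowel carriers, collapses emphatic consonants into their plain counterparts, and ignores dialect entirely.

```python
# Hypothetical, simplified mapping to shared consonant classes (illustration only).
LATIN_DIGRAPHS = {"kh": "KH", "sh": "SH", "th": "TH", "dh": "DH", "gh": "GH"}
LATIN_VOWELS = set("aeiouy")
ARABIC = {
    "ا": "", "ع": "", "و": "", "ي": "",  # treated as vowels or unwritten in Latin (simplification)
    "ب": "B", "ت": "T", "ث": "TH", "ج": "J", "ح": "H", "خ": "KH",
    "د": "D", "ذ": "DH", "ر": "R", "ز": "Z", "س": "S", "ش": "SH",
    "ص": "S", "ض": "D", "ط": "T", "ظ": "Z", "غ": "GH", "ف": "F",
    "ق": "Q", "ك": "K", "ل": "L", "م": "M", "ن": "N", "ه": "H",
}


def skeleton(name: str) -> list[str]:
    """Reduce a Latin- or Arabic-script name to a list of consonant-class codes."""
    text = name.lower()
    out: list[str] = []
    i = 0
    while i < len(text):
        pair = text[i:i + 2]
        if pair in LATIN_DIGRAPHS:  # e.g. "kh" and "sh" each count as one sound
            out.append(LATIN_DIGRAPHS[pair])
            i += 2
            continue
        ch = text[i]
        if ch in ARABIC:
            code = ARABIC[ch]
        elif "a" <= ch <= "z" and ch not in LATIN_VOWELS:
            code = ch.upper()
        else:
            code = ""  # vowels, spaces, punctuation and unmapped characters are ignored
        if code:
            out.append(code)
        i += 1
    # Collapse repeated codes, e.g. the doubled "dd" in "Haddad".
    return [c for k, c in enumerate(out) if k == 0 or c != out[k - 1]]


def edit_distance(a: list[str], b: list[str]) -> int:
    """Levenshtein distance between two code sequences."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, 1):
        cur = [i]
        for j, y in enumerate(b, 1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (x != y)))
        prev = cur
    return prev[-1]


def compare(name_a: str, name_b: str) -> tuple[bool, float]:
    """Return (skeletons identical, distance normalized to [0, 1])."""
    a, b = skeleton(name_a), skeleton(name_b)
    distance = edit_distance(a, b) / max(len(a), len(b), 1)
    return a == b, distance


print(compare("khaled mashal", "خالد مشعل"))
```

Both "khaled mashal" and the Arabic form reduce to the skeleton KH L D M SH L, so the flag is true and the distance is 0.0. A production system would need far richer, language-aware rules than this table.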

4. Phonetics as a screening aid, not a conclusion

A recurring theme in the meeting discussion was that phonetic similarity alone is insufficient for sanctions screening. Phonetics can help identify candidate names that warrant review, but it must be supplemented by additional linguistic and structural logic.

In the reference architecture, phonetic processing serves as an initial filter. Subsequent steps incorporate:

Language-specific naming conventions

Dialectal variations

Name order and multi-part name handling

Distinctions between individual and organizational names

This layered approach reflects a practical reality of sanctions screening: no single metric can resolve name equivalence across all cultures and scripts.
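A layered pipeline of this kind can be sketched in a few stages. The sketch below is hypothetical and covers only part of the list above: name order, multi-part names, and the individual/organization distinction. Standard American Soundex stands in as the phonetic filter, chosen for brevity rather than because the reference architecture uses it. The `ListEntry` type, its `entity_type` field and all names except "MISHAAL, Khalid" are invented for illustration.

```python
from dataclasses import dataclass

SOUNDEX_CODES = {
    **dict.fromkeys("bfpv", "1"), **dict.fromkeys("cgjkqsxz", "2"),
    **dict.fromkeys("dt", "3"), "l": "4", **dict.fromkeys("mn", "5"), "r": "6",
}


def soundex(word: str) -> str:
    """American Soundex: first letter plus three digits; h and w do not separate equal codes."""
    word = "".join(ch for ch in word.lower() if ch.isalpha())
    if not word:
        return ""
    result = word[0].upper()
    prev = SOUNDEX_CODES.get(word[0], "")
    for ch in word[1:]:
        code = SOUNDEX_CODES.get(ch, "")
        if code and code != prev:
            result += code
        if ch not in "hw":
            prev = code
    return (result + "000")[:4]


@dataclass(frozen=True)
class ListEntry:
    name: str
    entity_type: str  # "individual" or "organization" (hypothetical field)


def tokens(name: str) -> list[str]:
    """Split a name into lowercase alphabetic parts, ignoring commas and order."""
    return [p.lower() for p in name.replace(",", " ").split() if p.isalpha()]


def screen(query: str, query_type: str, entries: list[ListEntry]) -> list[tuple[str, float]]:
    q_keys = [soundex(t) for t in tokens(query)]
    results = []
    for entry in entries:
        e_keys = {soundex(t) for t in tokens(entry.name)}
        # Layer 1: phonetic pre-filter, keeping entries that share at least one key.
        if not e_keys.intersection(q_keys):
            continue
        # Layer 2: individuals are only compared with individuals, organizations with organizations.
        if entry.entity_type != query_type:
            continue
        # Layer 3: order-insensitive coverage of the query's name parts.
        coverage = sum(k in e_keys for k in q_keys) / len(q_keys)
        results.append((entry.name, coverage))
    return sorted(results, key=lambda r: r[1], reverse=True)


ENTRIES = [
    ListEntry("Khaled Nasser", "individual"),
    ListEntry("MISHAAL, Khalid", "individual"),
    ListEntry("Khaled Mashal Trading Co", "organization"),
    ListEntry("Khalil Haddad", "individual"),
]
print(screen("Khaled Mashal", "individual", ENTRIES))
```

In this toy run, the organization is filtered out by type and "Khalil Haddad" fails the phonetic pre-filter. "MISHAAL, Khalid" ranks first with full coverage despite the different spelling and name order.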

5. Field-specific screening and operational controls

Another aspect highlighted in the discussion was the importance of treating different data fields differently. Names, addresses, free-text payment messages, country codes, and vessel or aircraft identifiers each exhibit distinct ambiguity patterns.

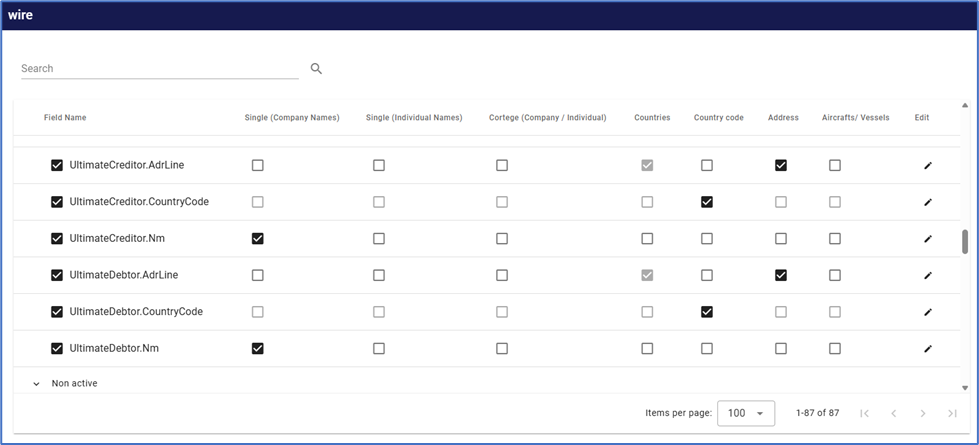

Figure 4: Administrative Configuration Screen Showing Field-Level Screening Controls

Separating screening logic by field allows institutions to reduce avoidable noise while maintaining appropriate coverage. For example:

Free-text fields may require exclusion logic for common transactional phrases.

Organization names often benefit from different handling than personal names.

Certain sanction programs may be relevant only to specific products or geographies.

This configurability supports alignment between screening behavior and institutional exposure without requiring structural changes to the underlying system.
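Field-level controls like these are often expressed as per-field configuration. The sketch below is hypothetical. The field names, excluded phrases, suffixes, thresholds and program labels such as `PROGRAM_A` are invented, and `difflib`'s ratio stands in for a real matcher. It shows three of the controls described above: exclusion of common phrases in free text, suffix handling for organization names, and scoping by sanction program.

```python
import re
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass(frozen=True)
class FieldConfig:
    threshold: float                        # minimum similarity that raises an alert
    exclude_phrases: tuple[str, ...] = ()   # common transactional phrases removed from free text
    strip_suffixes: tuple[str, ...] = ()    # trailing legal-form words ignored for organizations
    programs: frozenset = frozenset()       # sanction programs in scope; empty means all


# Hypothetical settings for illustration only.
CONFIG = {
    "payment_message": FieldConfig(0.90, exclude_phrases=("payment for invoice", "salary transfer")),
    "beneficiary_org": FieldConfig(0.85, strip_suffixes=("ltd", "llc", "inc", "co")),
    "beneficiary_person": FieldConfig(0.80, programs=frozenset({"PROGRAM_A"})),
}


def normalize(field_name: str, text: str) -> str:
    cfg = CONFIG[field_name]
    text = text.lower()
    for phrase in cfg.exclude_phrases:
        text = text.replace(phrase, " ")
    words = re.findall(r"[a-z]+", text)  # ASCII-only tokenizer, a simplification
    while words and words[-1] in cfg.strip_suffixes:
        words.pop()
    return " ".join(words)


def alerts(field_name: str, text: str, entries: list[tuple[str, str]]) -> list[tuple[str, str, float]]:
    """entries are (list_name, program) pairs; returns (list_name, program, score) hits."""
    cfg = CONFIG[field_name]
    value = normalize(field_name, text)
    hits = []
    for list_name, program in entries:
        if cfg.programs and program not in cfg.programs:
            continue  # program not relevant to this field's product or geography
        score = SequenceMatcher(None, value, normalize(field_name, list_name)).ratio()
        if score >= cfg.threshold:
            hits.append((list_name, program, round(score, 2)))
    return hits


print(alerts("beneficiary_org", "Acme Trading Ltd", [("ACME TRADING CO", "PROGRAM_A")]))
```

Because these settings live in configuration rather than in the matching code, they can be changed per field without altering the underlying system.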

6. Educational implications for sanctions screening programs

Viewed holistically, the examples discussed in the meeting illustrate several broader lessons that are relevant regardless of vendor or platform:

Sanctions screening challenges are driven primarily by name variability and representation differences.

Transliteration is not a neutral step and often introduces ambiguity.

Large fuzzy match result sets can conceal missed matches rather than prevent them.

Cross-lingual and cross-script handling is increasingly necessary as sanctions lists expand.

Effective screening depends on layered logic and field-specific controls rather than a single similarity score.

These are design considerations, not performance claims. Institutions evaluating or operating sanctions screening systems should focus on whether their controls explicitly address these issues, and whether screening outcomes can be understood and explained in practical terms.

Disclosure

This article is provided for educational purposes only. The authors have no compensation arrangement, financial relationship, or commercial interest in Fincom. References to the platform are used solely for illustrative purposes and do not constitute an endorsement. The views expressed are those of the authors and do not represent the views of any current or former employer or affiliated organization.

Meet The Authors

Chandrakant Maheshwari (pictured left) brings over 20 years of experience at the intersection of financial risk management, AML, and artificial intelligence. He has supported seven U.S. financial institutions in designing and operationalizing enterprise risk and AML model governance frameworks, translating regulatory expectations into scalable, real-world solutions. A published author and AI project lead, Chandrakant has developed practical GenAI tools including the AML Risk Tutor, SAR Narrative Enhancer, and an LLM-based Address Parser, focused on improving clarity, explainability, and regulatory confidence in compliance programs. He is driven by the belief that AI should not just automate risk, but make it more understandable.

Follow Chandrakant on LinkedIn: https://www.linkedin.com/in/chandrakant721/

Pranjal Dubey (pictured right) is a data scientist with eight years of experience delivering data-driven solutions that improve efficiency, accuracy, and decision-making across complex systems. Her expertise includes predictive modeling, regression analysis, and data mining, with a strong focus on transforming raw data into actionable insights. Pranjal specializes in building analytical solutions that solve real-world business problems, bridging advanced data science techniques with practical implementation.

Follow Pranjal on LinkedIn: https://www.linkedin.com/in/pranjal-dubey-a9656355/

Learn More About Fincom at: https://fincom.co/

Comments